MoneyMatters AI Coach


Independent Work (2026)

SITUATION

I wanted to explore how AI could support neurospicy adults (18-30) who struggle with financial literacy and habit-building.

Background

Neurospicy adults (18-30) who lack financial literacy often want financial stability but struggle with habit-building and with the workflow friction of personal finance apps: too many categories, too many screens, too many decisions, and unclear "what do I do next?" moments. At the same time, AI-assisted insights can reduce cognitive load if they're transparent, controllable, and non-judgmental. This case study explores a concept feature called Clarity Coach: an AI-assisted monthly money review that explains what changed, shows the evidence, and helps users take one realistic next step to reinforce habit-building.

Goals

-

Identify the highest-friction points in "monthly review → insight → action" flows for neurospicy users.

-

Design an AI-assisted experience that increases speed to understanding without reducing trust or user agency.

-

Validate usability quantitatively: task success, time-on-task, errors, confidence, SUS, trust/agency measures.

-

Apply responsible AI patterns (transparency, uncertainty cues, user control, safe recommendations).

Challenges and Risks

-

Over-reliance risk: users may accept AI suggestions without understanding.

-

Wrong insight risk: incorrect categorization/anomaly explanations could erode trust quickly.

-

Shame/mental load risk: finance UX can trigger avoidance, so tone and interaction design matter.

-

Privacy sensitivity: users may be wary of AI analyzing transactions.

-

Accessibility + neurodiversity: reduce overwhelm, support scanning, minimize steps, avoid dark patterns.

TASK

I owned end-to-end product strategy + UXR + UX design for a testable AI feature prototype.

Team

-

Me (Vanessa): Product Strategy + UXR + UXD + Responsible AI framing

-

Advisor/Reviewer: Faculty / Peer Designer / Engineer Friend

My Tasks

-

Defined target user + jobs-to-be-done and success metrics

-

Conducted baseline evaluation of existing patterns (competitive scan + heuristic review)

-

Mapped the key user journey: Monthly Review → Explanation → Next Action

-

Designed flows + IA + key screens and wrote AI interaction copy

-

Built a clickable prototype and test plan for quantitative usability evaluation

-

Synthesized findings into prioritized recommendations + KPI hypotheses

Tools

-

Figma (flows + prototype)

-

FigJam / Miro (mapping + synthesis)

-

Google Forms / Maze / UserTesting (unmoderated testing plan) (pick one)

-

Sheets (metrics, task scoring, charts)

-

Notion (research log + decisions)

Deliverables

-

Problem framing + JTBD + KPI hypothesis map

-

Competitive/heuristic findings summary

-

User flow + IA diagram

-

Clickable prototype (key screens)

-

Quant usability test plan (tasks, metrics, success criteria)

-

Findings summary + prioritized recommendations

-

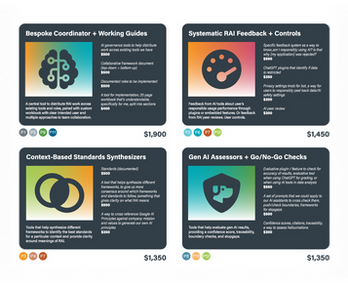

“Responsible AI requirements” checklist for product/design

Timeline

-

Week 1: framing + baseline evaluation + flow definition

-

Week 2: prototype design + content + AI interaction patterns

-

Week 3: quant usability test + analysis

-

Week 4: iteration + final narrative + recommendations

ACTION 1

Baseline evaluation revealed that "insight without clarity" and "action without confidence" are the biggest drop-off points.

Baseline evaluation (heuristics + competitive scan) → Key insights

Key insight 1: Most tools explain what happened, but not why in a way users can verify.

-

Users need evidence-based explanations (show the transactions behind the claim).

-

Without evidence, AI feels “magical,” which reduces trust.

Key insight 2: The jump from insight to action is too heavy.

-

“Set a budget” is cognitively expensive; users need one small next step with defaults.

-

Neurospicy users benefit from “minimum viable action” plus an optional deeper dive.

Key insight 3: Categorization errors create outsized frustration.

-

A single wrong category can distort the entire story of the month.

-

Fixing it must be fast, reversible, and teach the system.

Key insight 4: Tone is a usability feature.

-

Judgmental language increases avoidance; neutral, supportive language increases follow-through.

Tasks in order of average difficulty rating (for quant usability test)

(1 = easiest; 5 = hardest. Adjust after pilot.)

-

Find the biggest spending change this month (Avg difficulty target: 1.8/5)

-

Explain a spike and identify the evidence behind it (Avg difficulty target: 2.3/5)

-

Correct a miscategorized transaction and confirm the month summary updates (Avg difficulty target: 2.7/5)

-

Choose a “next best action” and customize it (Avg difficulty target: 3.2/5)

-

Set a soft limit + configure an accountability nudge (Avg difficulty target: 3.8/5)

-

Resolve a conflict: AI suggestion doesn’t fit user reality (edit/reject/alternative) (Avg difficulty target: 4.3/5)
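As a rough illustration of the "adjust after pilot" step, the sketch below compares observed mean difficulty ratings against the targets listed above and flags tasks that drift too far. The task keys, the `difficulty_gaps` helper, and the 0.5-point tolerance are all assumptions made for this example, not part of the test plan.

```python
from statistics import mean

# Target average difficulty per task, copied from the list above
# (1 = easiest, 5 = hardest). The short keys are hypothetical labels.
TARGETS = {
    "find_biggest_change": 1.8,
    "explain_spike": 2.3,
    "fix_category": 2.7,
    "choose_next_action": 3.2,
    "set_soft_limit": 3.8,
    "resolve_conflict": 4.3,
}


def difficulty_gaps(ratings, targets=TARGETS, tolerance=0.5):
    """Return {task: (observed_mean, target, flagged)} for rated tasks.

    `ratings` maps a task key to the list of 1-5 ratings collected for it.
    A task is flagged when its observed mean differs from the target by
    more than `tolerance` (an assumed threshold, to be tuned after pilot).
    """
    report = {}
    for task, target in targets.items():
        scores = ratings.get(task, [])
        if not scores:
            continue  # no data yet for this task
        observed = round(mean(scores), 2)
        flagged = abs(observed - target) > tolerance
        report[task] = (observed, target, flagged)
    return report
```

A flagged task is a prompt to revisit either the task wording or the target itself, not a verdict on the design.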

ACTION 2

Based on findings, I recommended a "Clarity Coach" flow built on evidence, agency, and one-tap next steps.

Prioritized recommendations

-

Create a single “Monthly Clarity” entry point with 3 questions: What changed? Why? What’s next?

-

Require evidence for every insight (“Based on these transactions…”) and let users drill down.

-

Add uncertainty cues + assumptions (“I might be wrong if…”), plus a one-tap “Fix this” path.

-

Design for user control: every recommendation is editable, dismissible, and offers alternatives.

-

Default to one small action (Minimum Viable Habit) with optional “level up” actions for motivated users.

-

Use non-judgmental language and avoid shame triggers; treat behavior as data, not morality.

-

Accessibility-first UI: scannable hierarchy, reduced clutter, consistent iconography, clear labels, keyboard/focus states, readable contrast.

-

Privacy-forward messaging + settings: clear explanation of what’s analyzed, what’s stored, and opt-out controls.
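One way to make the evidence, uncertainty, and control recommendations above concrete is to treat them as constraints on the insight data itself. The sketch below is a minimal illustration under that assumption; the `Transaction` and `Insight` types, their field names, and the `is_showable` and `recategorize` helpers are hypothetical and do not describe an existing implementation.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Transaction:
    merchant: str
    amount: float
    category: str


@dataclass
class Insight:
    claim: str                                   # "What changed?"
    evidence: List[Transaction] = field(default_factory=list)
    assumption: str = ""                         # the "I might be wrong if..." cue
    alternatives: List[str] = field(default_factory=list)


def is_showable(insight: Insight) -> bool:
    """Show an insight only if it cites evidence and states an uncertainty cue."""
    return bool(insight.evidence) and bool(insight.assumption.strip())


def recategorize(insight: Insight, index: int, new_category: str) -> str:
    """One-tap "Fix this": change a category and return the old one for undo."""
    tx = insight.evidence[index]
    previous = tx.category
    tx.category = new_category
    return previous
```

Returning the previous category keeps the fix reversible, which matches the "fast, reversible" requirement from the baseline findings.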

RESULT

Prototype testing showed improved clarity and control for key money tasks (quant usability metrics).

-

Study type: Unmoderated prototype usability test

-

Participants: n = ___ (target 20–30)

-

Task success improved on core tasks (e.g., “explain spike,” “choose next action”) from __% → __%

-

Median time-to-interpretation reduced from __ sec → __ sec

-

Average confidence increased from __/5 → __/5

-

SUS score: __ / 100

-

“I feel in control of AI suggestions” agreement: __%
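For the SUS figure above, the sketch below applies the standard System Usability Scale scoring: odd-numbered items contribute (answer - 1), even-numbered items contribute (5 - answer), and the sum is multiplied by 2.5. The function names and the input shape (one list of ten 1-5 answers per participant) are assumptions for this example.

```python
def sus_score(responses):
    """Score one participant's ten SUS answers (each 1-5) on the 0-100 scale."""
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("SUS needs ten answers, each an integer from 1 to 5")
    total = 0
    for i, answer in enumerate(responses):
        if i % 2 == 0:
            # Items 1, 3, 5, 7, 9 are positively worded.
            total += answer - 1
        else:
            # Items 2, 4, 6, 8, 10 are negatively worded.
            total += 5 - answer
    return total * 2.5


def mean_sus(all_responses):
    """Average SUS score across participants."""
    scores = [sus_score(r) for r in all_responses]
    return sum(scores) / len(scores)
```

Scoring per participant first and averaging afterwards keeps incomplete questionnaires easy to spot, since `sus_score` rejects them outright.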

RELEVANCE

This addresses a real market gap: young adults want financial literacy tools that reduce overwhelm without sacrificing trust.

-

Market/behavior reality: Many budgeting tools fail because they demand sustained attention and complex setup; low-friction habit loops are a retention advantage.

-

Product strategy value: “Clarity → Next Step” creates a repeatable weekly/monthly ritual that can increase activation and retention (and premium conversion in a paid model).

-

Responsible AI differentiation: Evidence-based insights + user control reduce the risk of harmful or opaque recommendations, which is especially important in sensitive money contexts.

-

Bigger-world problem: Financial stress compounds mental load; helping neurospicy young adults build money confidence supports economic resilience and wellbeing.

EVOLUTION

Next time, I'd tighten the experiment design, broaden accessibility validation, and test habit outcomes.

1) I’d run a pilot to calibrate task difficulty and success criteria

Pilot with 5 users to refine wording, eliminate ambiguous tasks, and set realistic benchmarks.

2) I’d add a stronger comparison condition

Compare a baseline monthly review against Clarity Coach to quantify uplift more cleanly (A/B prototype design).

3) I’d test habit formation signals over time

Add a lightweight longitudinal component (1–2 weeks) to measure: revisit rate, action completion, and perceived behavior change.

4) I’d deepen accessibility testing with assistive tech

Validate with screen readers + keyboard-only navigation + reduced motion settings; recruit at least a few users who rely on assistive tech.

5) I’d stress-test Responsible AI failure modes

Simulate wrong categorizations, incomplete data, and conflicting signals to validate recovery UX (“catch, correct, learn”).
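With a pilot of 5 users or a main study of 20 to 30, a raw task success percentage can look more precise than it is. A possible way to report the baseline versus Clarity Coach comparison is to attach a Wilson score interval to each success rate, as sketched below. The choice of the Wilson interval and the `wilson_interval` helper are assumptions for illustration, not part of the original plan.

```python
from math import sqrt


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a task success rate.

    `successes` out of `n` participants completed the task; z=1.96
    corresponds to roughly 95% confidence. Returns (low, high).
    """
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p = successes / n
    denom = 1 + z ** 2 / n
    center = (p + z ** 2 / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    # Clamp to [0, 1] to absorb floating-point noise at the extremes.
    return (max(0.0, center - half), min(1.0, center + half))
```

If the intervals for the two conditions overlap heavily, the honest reading is that the study did not separate them, which is useful input when setting the benchmarks in step 1.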

Next Steps

-

Finalize prototype v2 based on test results

-

Define analytics instrumentation plan (activation funnel, retention cohort, suggestion acceptance/rejection, error correction rates)

-

Build a “Responsible AI product spec” page: risks, mitigations, thresholds, and escalation patterns
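As a starting point for the instrumentation plan, the sketch below shows how suggestion acceptance and rejection rates could be derived from a flat event log. The event names and the `suggestion_rates` helper are hypothetical; a real plan would define its own event schema.

```python
from collections import Counter

# Hypothetical event names for the three ways a user can respond to a suggestion.
SUGGESTION_OUTCOMES = {
    "suggestion_accepted",
    "suggestion_edited",
    "suggestion_rejected",
}


def suggestion_rates(events):
    """Return the share of each suggestion outcome among all outcomes.

    `events` is an iterable of dicts with at least a "name" key. Events
    that are not suggestion outcomes are ignored. Returns an empty dict
    when no suggestion outcome has been logged.
    """
    counts = Counter(
        e["name"] for e in events if e["name"] in SUGGESTION_OUTCOMES
    )
    total = sum(counts.values())
    if total == 0:
        return {}
    return {name: counts[name] / total for name in sorted(SUGGESTION_OUTCOMES)}
```

Tracking "edited" separately from "accepted" matters for the over-reliance risk: a high share of unedited acceptances may signal that users are not engaging with the evidence.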